Agents are only as good as their context

The uncomfortable truth about agentic AI is that many failures are not model failures. They are context failures. The agent has reasoning ability, tool access, and a fluent interface, but it is forced to operate inside a broken map of the organization.

The relevant knowledge is scattered across Slack threads, CRM records, support tickets, policy documents, email decisions, old PR discussions, dashboards, customer notes, and private judgment calls that never became structured data. When an AI system has to make a business decision from that mess, it does what people do under pressure: it guesses.

This is why enterprise AI needs a memory layer. Not a bigger prompt. Not a longer chat history. A real structure for connecting people, systems, decisions, policies, events, documents, and the reasons previous actions were taken.

The enterprise is a graph already

Organizations do not operate like isolated rows in a database. A customer belongs to an account. An account has contracts, tickets, renewals, risk flags, approvals, product usage, and relationships with people inside and outside the company. A decision is connected to a policy, a person, a constraint, a precedent, and a moment in time.

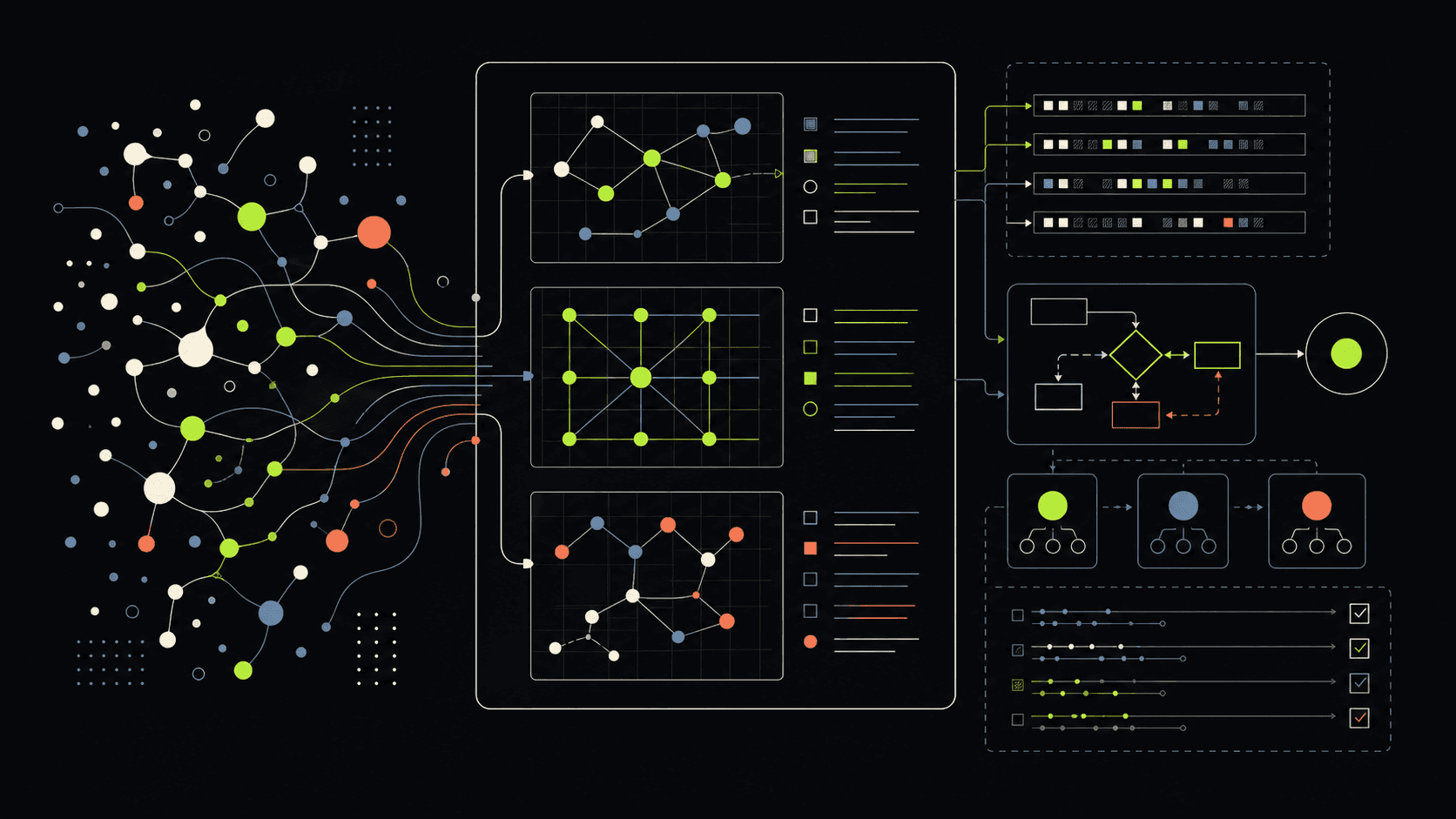

That shape is a graph. Nodes are entities: people, companies, systems, assets, documents, incidents, transactions, approvals, products, teams, workflows. Edges are relationships: owns, depends on, approved by, blocked by, escalated from, referenced in, similar to, changed after.
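To make the node and edge vocabulary concrete, here is a minimal sketch of that shape in Python. The class names (`Node`, `ContextGraph`), the identifiers, and the relation labels are illustrative assumptions, not a prescribed schema. A production system would more likely use a graph database.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    id: str
    kind: str  # entity type, e.g. "person", "company", "document"


@dataclass
class ContextGraph:
    nodes: dict = field(default_factory=dict)
    # Adjacency list: source id -> list of (relation, target id) pairs.
    edges: dict = field(default_factory=lambda: defaultdict(list))

    def add_node(self, node_id, kind):
        self.nodes[node_id] = Node(node_id, kind)

    def add_edge(self, source, relation, target):
        # Require both endpoints to exist so an edge never dangles.
        if source not in self.nodes or target not in self.nodes:
            raise KeyError("both endpoints must be added before the edge")
        self.edges[source].append((relation, target))

    def neighbors(self, node_id, relation=None):
        # Outgoing targets, optionally filtered by relation label.
        return [
            target
            for rel, target in self.edges.get(node_id, [])
            if relation is None or rel == relation
        ]


# Hypothetical example data.
graph = ContextGraph()
graph.add_node("acme", "company")
graph.add_node("contract-2024", "document")
graph.add_node("dana", "person")
graph.add_edge("acme", "owns", "contract-2024")
graph.add_edge("contract-2024", "approved_by", "dana")

print(graph.neighbors("acme"))                                   # ['contract-2024']
print(graph.neighbors("contract-2024", relation="approved_by"))  # ['dana']
```

Labeling each edge with its relation is what lets a later query ask for "who approved this" rather than "what is near this".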

When this structure is explicit, an AI system can navigate context the way a capable operator does. It can move from a support ticket to the account history, from the account to the risk policy, from the policy to a previous exception, and from the exception to the reasoning that made it acceptable.
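The walk from ticket to reasoning can be sketched as a chain of labeled hops. Everything here is hypothetical: the identifiers, the relation names, and the `follow` helper are assumptions chosen to mirror the example in the paragraph above, not a real API.

```python
# Hypothetical edges stored as (source, relation, target) triples.
EDGES = [
    ("ticket-881", "filed_for", "account-acme"),
    ("account-acme", "governed_by", "policy-credit-risk"),
    ("policy-credit-risk", "has_exception", "exception-17"),
    ("exception-17", "justified_by", "reasoning-17"),
    ("account-acme", "owned_by", "person-dana"),
]


def follow(edges, start, relations):
    """Walk one relation per hop from `start` and return the visited path.

    Raises LookupError if a hop has no matching edge. If several edges
    match a hop, the first one in `edges` is taken.
    """
    path = [start]
    current = start
    for relation in relations:
        targets = [t for s, r, t in edges if s == current and r == relation]
        if not targets:
            raise LookupError(f"no '{relation}' edge from {current}")
        current = targets[0]
        path.append(current)
    return path


path = follow(
    EDGES,
    "ticket-881",
    ["filed_for", "governed_by", "has_exception", "justified_by"],
)
print(" -> ".join(path))
# ticket-881 -> account-acme -> policy-credit-risk -> exception-17 -> reasoning-17
```

The returned path doubles as an explanation: each hop names the relationship that justified moving to the next piece of context.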

Retrieval is not enough

Vector retrieval is powerful, but similarity is not the same as relevance. A search result can be semantically close and still miss the decisive relationship. The paragraph that sounds similar may not be the policy that applies. The customer note that looks relevant may not reflect the latest approval. The previous case may be useful only because it shares a dependency that text similarity alone would not surface.

Graph retrieval changes the question. Instead of asking only, 'Which chunks sound like this question?', the system can ask, 'Which entities, relationships, decisions, and constraints surround this question?' That makes retrieval less like rummaging through a folder and more like navigating an operating model.
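One common way to combine the two questions is to treat similarity hits as seeds and then expand outward along relationships. The sketch below assumes the seed list came from a vector search that is not shown. The edge data and the `expand` function are hypothetical, and the hop limit is an arbitrary choice.

```python
from collections import deque

# Hypothetical (source, relation, target) triples.
EDGES = [
    ("note-42", "mentions", "account-acme"),
    ("account-acme", "governed_by", "policy-refunds"),
    ("account-acme", "has_approval", "approval-9"),
    ("policy-refunds", "superseded_by", "policy-refunds-v2"),
    ("note-77", "mentions", "account-globex"),
]


def expand(edges, seeds, max_hops=2):
    """Breadth-first expansion from the seed nodes.

    Returns {node: hop_count} for every node within `max_hops` of any
    seed. Edges are treated as undirected for the purpose of expansion.
    """
    adjacency = {}
    for source, _relation, target in edges:
        adjacency.setdefault(source, set()).add(target)
        adjacency.setdefault(target, set()).add(source)

    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue  # do not expand past the hop limit
        for neighbor in adjacency.get(node, ()):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    return distance


# Assume a similarity search returned this single note as its best hit.
context = expand(EDGES, seeds=["note-42"], max_hops=2)
for node, hops in sorted(context.items(), key=lambda item: item[1]):
    print(hops, node)
```

With these sample edges, the expansion reaches the governing policy and the approval even though neither needs to resemble the question textually. The unrelated `note-77` and the three-hop `policy-refunds-v2` stay out of the context.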

The practical benefit is grounding. A model can still use language, reasoning, and creativity, but its answer is anchored to a map of what the organization actually knows and how that knowledge is connected.

Memory has more than one timescale

An agentic system needs short-term memory: the current conversation, task state, tool calls, open questions, and intermediate decisions. This is the working memory of the system, and it matters because multi-step work breaks when the agent loses track of what just happened.

It also needs long-term memory: the durable domain model of customers, assets, policies, workflows, past decisions, incidents, exceptions, and institutional knowledge. This is where the system stops behaving like a helpful intern and starts behaving like software that understands the business terrain.

The most overlooked layer is reasoning memory. The system should not only store what was decided. It should store why. Which evidence was used? Which tool calls were made? Which policy applied? Which risk was accepted? Which alternative was rejected? Without reasoning traces, the enterprise gets outputs but not accountability.
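As a rough illustration, the questions above map onto the fields of a trace record. This is a sketch under assumptions: the `ReasoningTrace` name, its field names, and the sample values are hypothetical, and a real system would likely link each field to graph nodes rather than store plain strings.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ReasoningTrace:
    decision: str                # what was decided
    evidence: list               # which evidence was used
    tool_calls: list             # which tool calls were made
    policy: str                  # which policy applied
    accepted_risk: str           # which risk was accepted
    rejected_alternatives: list  # which alternatives were rejected
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def missing_fields(self):
        """Names of fields left empty, so incomplete traces can be flagged."""
        return [
            name
            for name, value in asdict(self).items()
            if value in ("", [], None)
        ]


# Hypothetical example: the risk that was accepted has not been recorded.
trace = ReasoningTrace(
    decision="approve refund",
    evidence=["ticket-881", "contract-2024"],
    tool_calls=["crm_lookup(account-acme)"],
    policy="policy-refunds",
    accepted_risk="",
    rejected_alternatives=["escalate to finance"],
)
print(trace.missing_fields())  # ['accepted_risk']
```

Checking for empty fields at write time is one simple way to keep traces from degrading into a record of outputs only.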

Context graphs make agent work auditable

Audit logs tell you that something happened. Context graphs can show why it happened. That distinction matters when agents move from convenience tasks into operational decisions.

If an AI assistant recommends rejecting a loan, escalating a support account, approving a maintenance action, changing a production schedule, or flagging a compliance issue, the organization needs more than a polished paragraph. It needs provenance. It needs to know what facts were used, what relationships were traversed, and what precedent shaped the recommendation.

This is where graph-based memory becomes infrastructure. The same structure that helps the agent reason also helps humans inspect, debug, challenge, and improve the system.

The agent loop becomes a learning loop

A strong context graph is not static documentation. It is a loop. The agent retrieves context from the graph, uses tools, reasons through the task, produces an output, and then writes useful traces back into memory.
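The loop can be sketched in a few lines. In this simplified version, memory is a plain list rather than a graph, and the `decide` function is a hypothetical stand-in for model reasoning and tool use. The point is only the shape: retrieve, decide, write the trace back.

```python
def run_task(memory, task, decide):
    """One pass of the loop: retrieve context, decide, write a trace back."""
    # Retrieve: prior traces about the same subject.
    context = [t for t in memory if t["subject"] == task["subject"]]
    # Reason: delegate to the supplied decision function.
    decision, reason = decide(task, context)
    # Write back: store what was decided and why.
    trace = {
        "subject": task["subject"],
        "question": task["question"],
        "decision": decision,
        "reason": reason,
        "context_used": len(context),
    }
    memory.append(trace)
    return trace


def decide(task, context):
    # Hypothetical stand-in for model reasoning: reuse the most recent
    # precedent for the same question, otherwise hand off to a person.
    for prior in reversed(context):
        if prior["question"] == task["question"]:
            return prior["decision"], "followed precedent"
    return "escalate to human", "no precedent found"


memory = []
task = {"subject": "account-acme", "question": "waive late fee?"}

first = run_task(memory, task, decide)
print(first["decision"])  # escalate to human

# A human override is recorded as a trace of its own.
memory.append({
    "subject": "account-acme",
    "question": "waive late fee?",
    "decision": "approve",
    "reason": "human override",
    "context_used": 0,
})

second = run_task(memory, task, decide)
print(second["decision"], "/", second["reason"])  # approve / followed precedent
```

The second run behaves differently from the first only because the override was written into memory, which is the learning effect the loop is meant to produce.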

Over time, the graph becomes richer. It accumulates successful decisions, failed decisions, edge cases, human overrides, customer-specific constraints, domain patterns, and workflow knowledge that would otherwise disappear into chat history.

This is the difference between an AI feature and an AI organization. The feature answers a question. The organization gets better at answering the next question because it remembers what happened and why.

Context graphs belong in real operating arenas

In industrial intelligence, a context graph can connect machines, inspections, sensor events, maintenance history, operators, safety procedures, and production constraints. The agent can reason not only about an anomaly, but about what that anomaly means for the asset, line, shift, and risk posture.

In media intelligence, the graph can connect footage, transcripts, rights, people, locations, storylines, edits, production schedules, and editorial decisions. Search becomes less about finding a clip and more about understanding how an archive participates in production work.

In enterprise labs, the graph can connect experiments, sponsors, users, datasets, governance constraints, pilot outcomes, and platform decisions. That gives innovation teams a way to preserve learning across prototypes instead of restarting from zero every quarter.

The real product is connected judgment

The promise of context graphs is not that every company needs another database. The promise is that agentic systems need a way to preserve connected judgment.

The model brings language and reasoning. The graph brings structure, memory, relationships, and provenance. Together, they create a system that can retrieve the right context, explain the path it took, learn from previous work, and give humans something they can inspect.

For Mutant Company, this is central to how enterprise AI should be built. The future is not a collection of disconnected copilots. It is intelligence wired into the actual shape of the organization: its tools, assets, workflows, decisions, and memory.