The model is not where vertical AI is won

Frontier models are already good enough to make many vertical AI products possible. The harder question is whether an organization can teach those models what good looks like inside a specific workflow.

That is the last-mile problem. A product can look impressive in a demo and still fail when it meets the edge cases, judgment calls, habits, constraints, and invisible standards of a real domain.

In vertical AI, the winner is often not the team with the most sophisticated model pipeline. It is the organization that can continuously absorb domain insight and turn it into product behavior.

Domain expertise is a product function

Domain expertise is not decoration. It is not a quote in a sales deck or a review call at the end of a sprint. If the product automates expert work, expert judgment has to be part of how the product is built, measured, and improved.

Sometimes that expertise is formal: a clinician, lawyer, underwriter, engineer, producer, operator, or compliance specialist. Sometimes it is informal: the person who has spent years inside the workflow and knows where the real decisions happen.

The key is not just hiring an expert. The key is designing the organization so that expertise has a path into the product.

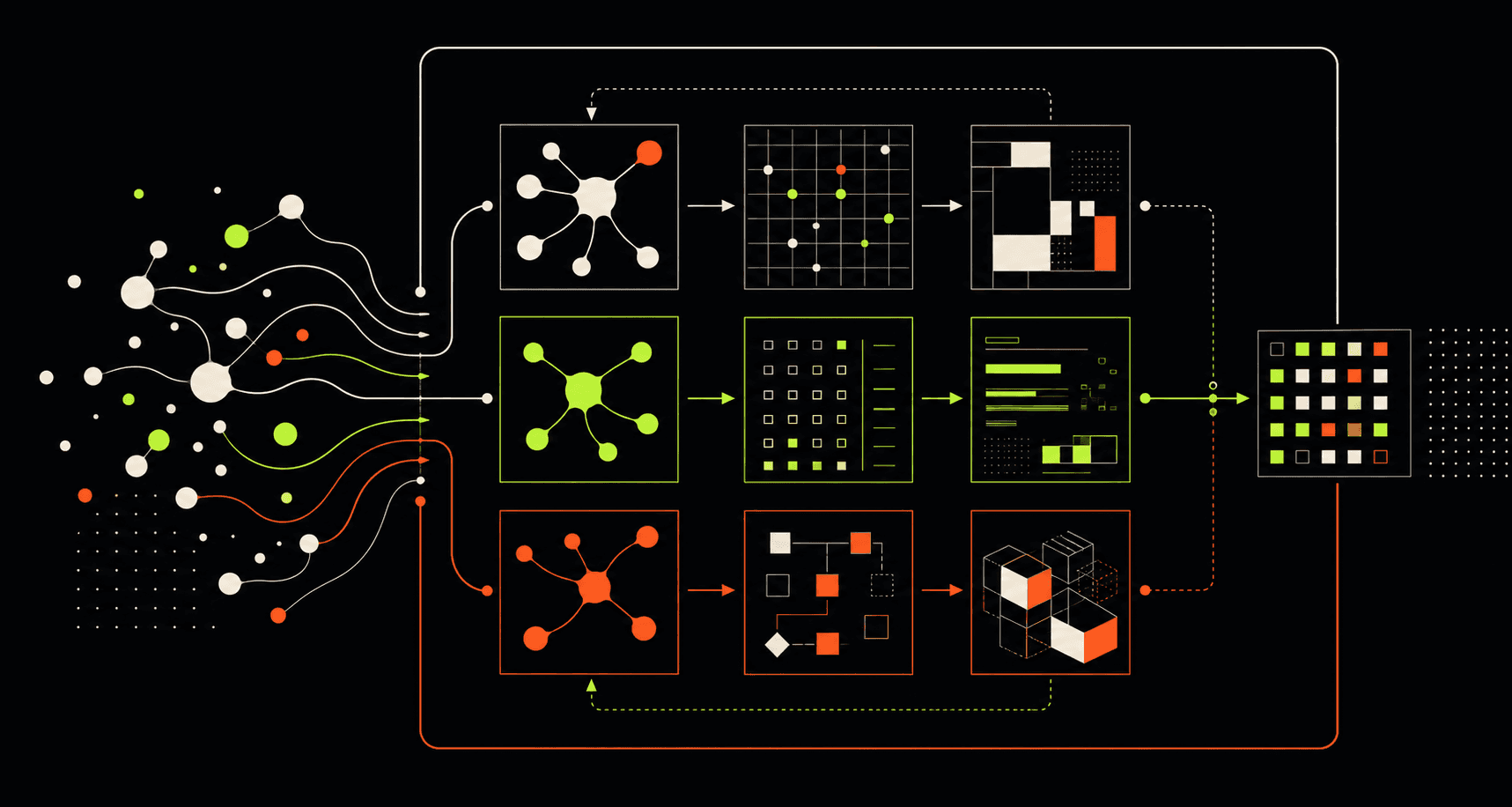

Three roles: oracle, evaluator, architect

The oracle directly improves the product. They inspect outputs, notice failure modes, adjust prompts, refine instructions, add context, and make taste or judgment calls. This works especially well early, when the product is small enough for one expert to hold the quality bar in their head.

The evaluator defines quality. They decide what should be measured, how reviews should happen, which failures matter, and what evidence engineers should use to improve the system. This becomes necessary when the product has scale, variation, or customer-specific nuance.
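
To make the evaluator role concrete, here is a minimal sketch of what "deciding which failures matter" could look like once it is written down. Everything in it is an assumption for illustration: the `Review` record, the failure-mode labels, and the `SEVERITY` weights are hypothetical and would come from the domain expert in a real product.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Review:
    """One expert judgment about one product output (hypothetical schema)."""
    output_id: str
    passed: bool
    failure_mode: Optional[str] = None  # expert-assigned label; None when passed


# Hypothetical severity weights. The point is that the domain expert,
# not the engineer, decides how much each kind of failure matters.
SEVERITY = {"unsafe_recommendation": 5, "missing_context": 2, "tone": 1}


def summarize(reviews: list[Review]) -> dict:
    """Return the pass rate and the failure modes ranked by weighted count."""
    failures = Counter(r.failure_mode for r in reviews if not r.passed)
    ranked = sorted(
        failures,
        key=lambda mode: failures[mode] * SEVERITY.get(mode, 1),
        reverse=True,
    )
    pass_rate = sum(r.passed for r in reviews) / len(reviews) if reviews else 0.0
    return {"pass_rate": pass_rate, "ranked_failures": ranked}


# Illustrative data only: "tone" is more frequent, but the single
# unsafe recommendation outranks it once severity is applied.
reviews = [
    Review("a1", True),
    Review("a2", False, "tone"),
    Review("a3", False, "tone"),
    Review("a4", False, "unsafe_recommendation"),
]
summary = summarize(reviews)
```

The design choice worth noticing is the ranking: raw frequency would tell engineers to fix tone first, while the expert's weights point them at the rarer failure that actually matters in the domain.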

The architect designs the system that learns. They create feedback loops, review pipelines, user signals, automated improvement mechanisms, and organizational infrastructure so the product can keep absorbing domain knowledge without every improvement depending on manual intervention.

The role should evolve with scale

Many strong AI products start with an oracle. One principal domain expert looks at the outputs, owns the quality bar, and makes direct improvements. This is fast, opinionated, and useful while the surface area is still manageable.

As variation grows, the organization needs evaluation infrastructure. Different customers, geographies, specialties, workflows, and policies create quality questions one person cannot answer manually forever.

Eventually, when manual iteration becomes too slow, the domain expert has to help architect the learning system itself. The question shifts from 'how do I fix this output?' to 'how do we build a product that discovers this class of failure and improves from it?'

What this means for Mutant Company

For physical AI, media intelligence, and enterprise lab work, domain expertise cannot be bolted on after the prototype. The domain expert has to be close to the operating environment from the beginning.

In an industrial pilot, that might mean the maintenance lead, line supervisor, safety owner, or robotics engineer who knows which signals matter and which alerts will be ignored. In media, it might be the producer, archivist, editor, or newsroom operator who understands what useful retrieval or automation actually feels like.

Our bias is to build domain-native teams around each serious pilot: a principal domain expert, a product-minded engineer, and a system for turning judgment into measurable improvement. That is how frontier technology becomes operational capability instead of theater.